A Few Representative Examples of Experience in Robotics Research

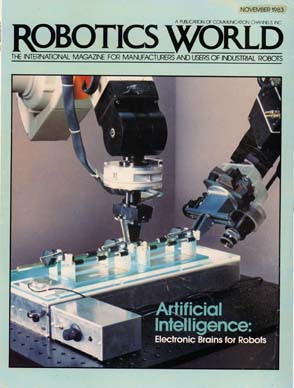

This picture is taken from the cover of the November 1983 issue of Robotics World magazine. It shows two robots putting together parts from a teletype assembly on a light table. The beige robot is outfitted with a 6 d.o.f. force/torque sensor, and its pneumatic gripper fingers are specially modified to help with compliance. The robot is pressing down on a white rocker to snap it into place around the end of a roller. It feels the snap through the force sensor and then continues with the next rocker. The assembly is held by the black robot, which has a translational jaw, a remote-center-of-rotation passively compliant wrist mount, and a CCD camera mounted on its wrist for close-up inspection. Note the cutout triangles in the floor of the aluminum jig; these were designed to allow the vision system to locate the jig. The two robots, the hand camera, and a camera in the ceiling each had a controlling computer on a LAN, and all were part of an early distributed system; the controllers were coordinated asynchronously by a master computer that ran the workcell's program. This work was performed jointly with Randy Smith while the president was a researcher/consultant at SRI International during the 1980s.

Here is a close-up of the hand camera visually acquiring rocker part positions and orientations, so the robot can pick them up. The rocker parts are white, against a black background; the system uses the SRI Vision Module, later produced by Machine Intelligence Corporation (MIC), to do image thresholding and blob analysis.
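As a rough illustration of thresholding followed by blob analysis, the Python sketch below finds bright regions in a small grayscale image and reports each region's area, centroid, and principal-axis angle. It is not the SRI Vision Module's algorithm. The names `find_blobs` and `describe`, the 4-connected flood fill, and the moment-based orientation are all assumptions chosen for illustration.

```python
import math
from collections import deque


def describe(pixels):
    """Area, centroid, and principal-axis angle (radians, image coordinates)
    computed from the second-order central moments of a pixel list."""
    n = len(pixels)
    cy = sum(r for r, _ in pixels) / n
    cx = sum(c for _, c in pixels) / n
    mu20 = sum((c - cx) ** 2 for _, c in pixels) / n
    mu02 = sum((r - cy) ** 2 for r, _ in pixels) / n
    mu11 = sum((c - cx) * (r - cy) for r, c in pixels) / n
    angle = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return {"area": n, "centroid": (cx, cy), "angle": angle}


def find_blobs(image, threshold):
    """Threshold a grayscale image (list of rows) and return one
    description per 4-connected region of pixels >= threshold."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0] or image[r0][c0] < threshold:
                continue
            # Flood-fill one connected bright region starting here.
            pixels = []
            queue = deque([(r0, c0)])
            seen[r0][c0] = True
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and not seen[nr][nc]
                            and image[nr][nc] >= threshold):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            blobs.append(describe(pixels))
    return blobs


# Hypothetical test image: one horizontal bar and one vertical bar.
image = [
    [0,   0,   0,   0,   0, 0,   0],
    [0, 200, 200, 200, 200, 0,   0],
    [0,   0,   0,   0,   0, 0,   0],
    [0,   0,   0,   0,   0, 0, 255],
    [0,   0,   0,   0,   0, 0, 255],
    [0,   0,   0,   0,   0, 0, 255],
]
blobs = find_blobs(image, threshold=128)
```

With white parts on a black background, a single fixed threshold like this is usually enough to separate the parts; the centroid and angle of each blob then give a pick-up position and orientation in image coordinates, which would still need to be mapped into robot coordinates.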

This diagram illustrates the main result from the president's 1981 thesis at Carnegie-Mellon, "A Supervisory Collision-Avoidance System for Robot Controllers". A full spatial virtual-reality model of a robot plus its surroundings is used to plan paths that avoid collisions. Although only the hand is shown here for clarity, the system works with the entire volume of a 6-jointed PUMA arm having rotational joints.

The system consisted of three main layers. The first, a spatial modeling system, modeled where the arm was, along with obstacles and environmental objects to be manipulated. This modeling layer was augmented by a 3D graphics system, a user interface, and an animation system to drive the arm to selected poses and to handle grasping and releasing objects. On top of the modeling layer, a spatial collision-detection layer was implemented. It used a hierarchy of enclosing volumes to speed computation. Spheres, planes, cylinders, and blocks of any size and orientation were handled using special geometric formulae. As a result, the system could tell whether any part of the arm (such as the wrist or the elbow) or the object the arm was holding was in collision with any part of the environment, and if so, where.
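The speed-up from a hierarchy of enclosing volumes can be sketched in a few lines of Python. This sketch uses spheres only; the planes, cylinders, and blocks mentioned above are left out for brevity. The `Node` class, the `collisions` function, and the example geometry are hypothetical and are not taken from the thesis.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    """A bounding sphere. A node with children encloses all of them;
    a node without children is an actual part (a leaf)."""
    name: str
    center: tuple
    radius: float
    children: list = field(default_factory=list)


def spheres_overlap(a, b):
    return math.dist(a.center, b.center) <= a.radius + b.radius


def collisions(a, b):
    """Return (leaf_a, leaf_b) name pairs whose spheres overlap."""
    if not spheres_overlap(a, b):
        return []  # Prune: nothing inside either volume can touch.
    if not a.children and not b.children:
        return [(a.name, b.name)]
    # Descend into whichever node has children, preferring the larger one.
    if a.children and (not b.children or a.radius >= b.radius):
        return [hit for child in a.children for hit in collisions(child, b)]
    return [hit for child in b.children for hit in collisions(a, child)]


# Hypothetical arm: one enclosing sphere around two link spheres.
arm = Node("arm", (0.0, 0.0, 1.0), 1.0, [
    Node("elbow", (0.0, 0.0, 0.5), 0.3),
    Node("wrist", (0.0, 0.0, 1.5), 0.3),
])
post = Node("post", (0.4, 0.0, 1.5), 0.2)      # close to the wrist
far_box = Node("far_box", (5.0, 0.0, 0.0), 0.5)

hits_near = collisions(arm, post)
hits_far = collisions(arm, far_box)
```

The point of the hierarchy is the early return: `far_box` is rejected with a single test against the arm's outer sphere, while `post` triggers the finer per-link tests that report which part is in collision.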

The third layer used the second layer to perform path planning ("collision avoidance") from a known start position to a known goal position. The system proposed candidate trajectories and checked each one with the collision-detection system. Based on the results, it kept planning paths until it found one that was collision-free. That path was then sent to the physical robot for execution.
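The propose-and-check loop described above can be sketched as follows. This is a deliberately simplified 2D stand-in, not the thesis planner: a point moves among circular obstacles, and candidates are a straight line followed by detours through one via point. The function names, the candidate-generation strategy, and the sampling step are all assumptions for illustration, and `start` and `goal` are assumed to be distinct points.

```python
import math


def segment_hits(p, q, obstacles, step=0.05):
    """Sample points along segment p->q; True if any lies in an obstacle.
    Obstacles are (x, y, radius) circles."""
    n = max(1, int(math.dist(p, q) / step))
    for i in range(n + 1):
        t = i / n
        x = p[0] + t * (q[0] - p[0])
        y = p[1] + t * (q[1] - p[1])
        if any(math.hypot(x - ox, y - oy) <= r for ox, oy, r in obstacles):
            return True
    return False


def path_is_free(path, obstacles):
    """Check every consecutive pair of waypoints with the collision test."""
    return not any(segment_hits(a, b, obstacles)
                   for a, b in zip(path, path[1:]))


def candidate_paths(start, goal, max_offset=3.0, increment=0.5):
    """Propose the straight path first, then detours through a via point
    pushed sideways from the midpoint by growing amounts."""
    yield [start, goal]
    mx, my = (start[0] + goal[0]) / 2, (start[1] + goal[1]) / 2
    dx, dy = goal[0] - start[0], goal[1] - start[1]
    length = math.hypot(dx, dy)
    nx, ny = -dy / length, dx / length  # unit normal to the straight path
    offset = increment
    while offset <= max_offset:
        for sign in (1, -1):
            yield [start,
                   (mx + sign * offset * nx, my + sign * offset * ny),
                   goal]
        offset += increment


def plan(start, goal, obstacles):
    """Return the first proposed path that passes the collision check."""
    for path in candidate_paths(start, goal):
        if path_is_free(path, obstacles):
            return path
    return None  # No candidate worked.


obstacles = [(2.0, 0.0, 0.5)]
path = plan((0.0, 0.0), (4.0, 0.0), obstacles)
```

Keeping the planner separate from `path_is_free` mirrors the layering in the text: the planner only proposes, and the collision-detection layer decides whether a proposal is acceptable.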

The system did not run merely in simulation, but was used to drive an actual robot. Collision detection and avoidance were also performed for premodeled objects that the robot grasped. Making sure that the robot itself avoids collisions is not enough; the software has to perform avoidance for whatever the robot is holding as well. More than 20 years later, this system is still cutting-edge research.

Here is a robotic testbed built by JPL, the primary basic research branch of NASA. A satellite mockup is hung from the ceiling on a special constant-force spring that keeps it "floating". The two front robots are on rails that allow them to travel back and forth, while the rear robot employs twin cameras to get a wide stereo view of the situation. This physical installation was designed and built by the JPL researchers.

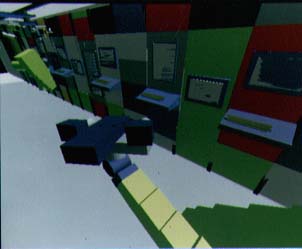

This virtual-reality model of the robot testbed was built for JPL for use in action planning. Like all of the other models, it incorporates full solid-to-solid collision detection. This research was performed around 1985.

This is a photo of the insides of a Space Station mock-up used by NASA.

Here are two views of a virtual-reality simulation of the Space Station. A ceiling track has been added for robots to move back and forth. The second view shows a robot's-eye view of another robot working.

Other robotics research involved such things as color vision, A.I. planning, expert system coding, sensor simulation, pattern recognition, range image processing, touch-sensor imaging, scheduling, interactive speech generation, and lots and lots of system integration.